- Artificial Intelligence is successfully decoding complex brain signals, translating imagined thoughts and attempted speech into real-time text.

- Pioneering research from Stanford University and Japan is offering a new voice to individuals with paralysis and neurodegenerative diseases.

- These advanced Brain-Computer Interfaces (BCIs) utilize sophisticated machine learning algorithms to interpret neural activity.

- The technology is rapidly approaching commercialization, promising to revolutionize communication and human-computer interaction.

The Genesis of Brain-Computer Interfaces (BCIs)

Brain-Computer Interfaces (BCIs) represent a direct communication pathway between the brain and an external device. While the concept might seem futuristic, its roots trace back decades. As early as 1969, neuroscientist Eberhard Fetz demonstrated that monkeys could learn to move a meter needle by controlling the activity of a single neuron. A few years before that, in 1963, José Delgado famously halted a charging bull by radio-triggering an electrode implanted in its brain, an early demonstration of sending signals into the brain rather than reading them out. For years, BCIs have excelled at decoding signals for movement, enabling control over prosthetic limbs or cursors. However, the decoding of complex thoughts, particularly speech, proved to be a far more intricate challenge.

AI's Role in Speech Decoding Evolution

The true acceleration in speech-decoding BCIs began with the integration of Artificial Intelligence, specifically machine learning. These algorithms are adept at recognizing intricate patterns within vast, disparate datasets. In the context of the brain, a model is trained to identify the neural activity patterns associated with specific phonemes, the smallest units of speech sound that distinguish one word from another. This is akin to smart assistants interpreting sound waves, but here, the AI interprets the electrical 'crackle' of neurons.
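To make the idea of "learning neural patterns for phonemes" concrete, here is a minimal toy sketch. It is not the method used by any of the labs mentioned in this article, whose decoders are far more elaborate. Everything in it is an assumption for illustration: the four-phoneme label set, the 16 simulated channels, the synthetic Gaussian "firing rate" data, and the nearest-centroid classifier standing in for a real decoder.

```python
import math
import random

PHONEMES = ["AH", "B", "K", "S"]  # hypothetical label set, for illustration only
N_CHANNELS = 16                   # assumed number of recording channels


def simulate_trials(rng, templates, n_per_class=40, noise=0.5):
    """Return (features, label) pairs: each phoneme's template plus Gaussian noise."""
    trials = []
    for label, template in enumerate(templates):
        for _ in range(n_per_class):
            trials.append(([v + rng.gauss(0.0, noise) for v in template], label))
    return trials


def fit_centroids(trials):
    """Average the feature vectors of each class into one centroid per phoneme."""
    sums = [[0.0] * N_CHANNELS for _ in PHONEMES]
    counts = [0] * len(PHONEMES)
    for features, label in trials:
        counts[label] += 1
        for i, v in enumerate(features):
            sums[label][i] += v
    return [[s / counts[k] for s in sums[k]] for k in range(len(PHONEMES))]


def predict(centroids, features):
    """Pick the phoneme whose centroid is nearest in Euclidean distance."""
    return min(range(len(centroids)), key=lambda k: math.dist(centroids[k], features))


# Synthetic stand-in for recorded neural data: one random pattern per phoneme.
rng = random.Random(0)
templates = [[rng.gauss(0.0, 1.0) for _ in range(N_CHANNELS)] for _ in PHONEMES]
train = simulate_trials(rng, templates)
test = simulate_trials(rng, templates)  # fresh noise, same underlying patterns

centroids = fit_centroids(train)
accuracy = sum(predict(centroids, f) == y for f, y in test) / len(test)
print(f"held-out accuracy on synthetic data: {accuracy:.2f}")
```

The synthetic patterns here are deliberately easy to separate. Real neural recordings are noisier and drift over time, which is part of why practical systems lean on much larger models and on language models to clean up the decoded phoneme stream.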

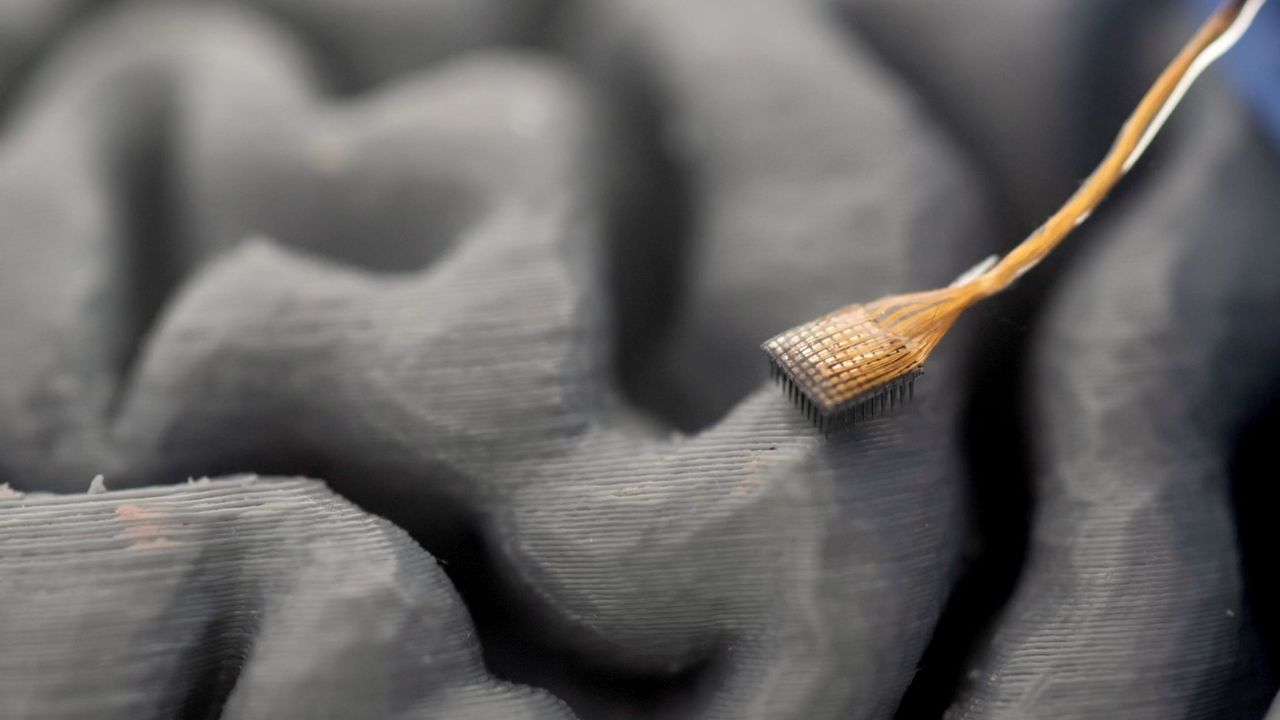

Early breakthroughs demonstrated impressive capabilities. In 2021, Stanford researchers enabled a quadriplegic man to 'write' 18 words per minute by picturing himself drawing letters. More recently, in 2024, Maitreyee Wairagkar's lab achieved 32 words per minute with 97.5% accuracy by translating the attempted speech of an ALS patient directly into text. These methods typically involve surgically implanted microelectrode arrays in the motor cortex, interpreting the brain signals that would normally precede physical speech.
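Figures like "32 words per minute" and "97.5% accuracy" are easier to interpret with the arithmetic spelled out. The sketch below assumes accuracy means one minus the word error rate (WER), a common convention in speech decoding; the article itself does not state which definition each study used. The sentences, timing, and function names are made up for illustration.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level edit distance divided by the number of reference words."""
    ref, hyp = reference.split(), hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    # prev[j] is the edit distance between the reference words seen so far and hyp[:j]
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        curr = [i]
        for j, h in enumerate(hyp, start=1):
            curr.append(min(
                prev[j] + 1,             # deletion: reference word missing from output
                curr[j - 1] + 1,         # insertion: extra word in output
                prev[j - 1] + (r != h),  # substitution, or a free match
            ))
        prev = curr
    return prev[-1] / len(ref)


def words_per_minute(n_words: int, seconds: float) -> float:
    """Convert a word count over an elapsed time into a per-minute rate."""
    return n_words * 60.0 / seconds


reference = "i would like a glass of water please"  # what the user intended (made up)
decoded = "i would like a class of water"           # what the decoder produced (made up)

wer = word_error_rate(reference, decoded)
print(f"WER: {wer:.3f}, accuracy: {1 - wer:.3f}")
print(f"rate: {words_per_minute(len(decoded.split()), 15.0):.1f} wpm")
```

In this made-up pair, one substituted word and one dropped word against eight reference words give a WER of 0.25, or 75% accuracy. By the same measure, 97.5% accuracy corresponds to roughly one wrong word in every forty.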

Decoding "Inner Speech": The Latest Frontier

While impressive, decoding 'attempted speech' still requires the user to physically try to speak, which takes effort. The latest breakthrough from Stanford University pushes the boundary further, exploring the decoding of inner speech – the words we think to ourselves without vocalizing or even attempting to vocalize. In a study involving participant T16, a woman paralyzed by a stroke, researchers successfully translated her internal monologue into text on a screen. This involved placing electrode arrays in her frontal lobe and using AI to interpret neural signals as she merely imagined saying words.

For tasks that involved imagining a given sentence, the technology achieved an accuracy of up to 74% in real time. Accuracy dropped for more spontaneous inner speech, and for open-ended prompts like 'think about your favorite quote' the decoded output was largely 'gibberish.' Even so, this is a major step towards a true thought-to-text interface, one that moves beyond the physical act of attempted speech.

Specs & Data: BCI Speech Decoding Milestones

| Year | Research Team / Method | Input Type | Output | Accuracy |
|---|---|---|---|---|
| 2021 | Stanford University | Imagining drawing letters | 18 words per minute | N/A (proof-of-concept) |
| 2024 | Wairagkar's Lab | Attempted speech | 32 words per minute | 97.5% |
| 2025 (Aug) | Stanford University | Imagined sentences (inner speech) | Real-time text | Up to 74% |
| 2025 (late) | Japan (Mind Captioning) | Seeing/picturing objects | Detailed descriptions | High (non-invasive) |

Market Impact: Reshaping Communication and Interaction

The market impact of these BCI advancements is profound and far-reaching. Most immediately, they offer an unprecedented lifeline for people living with paralysis, 'locked-in' syndrome, or neurodegenerative diseases like ALS, providing them with a direct means of communication. Beyond assistive technology, these breakthroughs pave the way for a radical transformation of how humans interact with technology and even with each other.

The promise of commercialization is already evident, with companies like Elon Musk's Neuralink actively developing brain chips to bring this technology out of the lab and into mainstream use. This could lead to a new era of hands-free, voice-free control over devices, potentially enhancing productivity and accessibility across various industries. However, it also raises critical discussions about privacy, ethics, and the very definition of human interaction in a world where thoughts can be directly translated.

The Verdict: A Glimpse into the Future of Mind-Tech Synergy

The ability of AI to decode human thought, moving from interpreting physical actions to the nuances of inner speech, marks a monumental step in human-computer interaction. While limitations persist, particularly with the accuracy of spontaneous thought translation, the progress made by Stanford and other researchers is nothing short of revolutionary. This technology holds immense promise for restoring communication and agency to those who have lost it, and stands as a testament to the transformative power of AI when fused with neuroscience. The future of communication may well be less about speaking or typing, and more about thinking.